Shall We Play a Coordination Game?

“The seasons change; and both of us lose our harvests for want of mutual confidence and security.” – David Hume, A Treatise on Human Nature

As I expounded before, security should be treated as a product – as “something created through a process that provides benefits” to the organization. Every product has a purpose, something it is trying to help its users accomplish. If security is a product, what is its purpose? What is it trying to help its users – the organization – accomplish? Without this purpose, security can become aimless – falling into the wretched trap of “security for its own sake.”

If we treat security instead as a business enabler, what results? In many tech organizations, the most critical business enabler is software delivery performance. Therefore, security should cooperate with relevant stakeholders who focus on software delivery performance, especially the engineering organization (colloquially known as the DevOps function).

As is well-discussed in the industry, the relationship between security and DevOps is typically described as fraught, icy, or adversarial – a far cry from cooperative, let alone collaborative. There are cultural reasons for this, but I will not be covering them in this post. Instead, I am going to draw on behavioral economics, looking both to cooperation games within game theory and the concept of moral hazard as a lens through which we can better understand the security and DevOps relationship.

So, shall we play a coordination game? Let’s dive in.

Barriers to Cooperation

Cooperation Games

Most relationships in life can be considered through the concept of “games,” behavioral relationships between decision-makers involving certain rules or conditions. It is worth exploring the different potential attributes of games as a backdrop for how to think about the game infosec plays with its DevOps peers.

Games can involve cooperation or non-cooperation. Non-cooperative games involve competition between the game’s players, without any sort of external authority to enforce cooperation between the players – resulting in no chance for alliance. The game between attackers and defenders can be considered a non-cooperative game. Cooperative games, unsurprisingly, involve cooperation rather than competition. Players can form coalitions to coordinate their strategies and share potential payoffs1. One of the more famous cooperation games is the Prisoner’s Dilemma, a non-zero-sum cooperation game in which two prisoners must make the decision to confess or stay silent.

What is a non-zero-sum game? In zero-sum games, the total payoffs for all players in the game add up to zero. That is, one player’s gain will equal the other player’s loss exactly. Few real-world scenarios involve zero-sum games, but poker is an example of one. Non-zero-sum games are thus games in which the total payoffs for all players do not add up to zero – that is, the gain by one player does not result in an equivalent loss by the other player. Free trade is an example of a non-zero-sum game in which all players can benefit in a win-win scenario. The aforementioned Prisoner’s Dilemma is non-zero-sum, as it can result in a win-win or lose-lose scenario.
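To make the distinction concrete, here is a minimal Python sketch that checks whether a two-player game is zero-sum. The payoff numbers are hypothetical and chosen purely for illustration (negative values stand in for years in prison), and the names `PRISONERS_DILEMMA`, `WAGER`, `is_zero_sum`, and `total_payoffs` are my own rather than anything from the literature.

```python
# Hypothetical payoffs for illustration only.
# Keys are (choice of player A, choice of player B);
# values are (payoff to A, payoff to B).
PRISONERS_DILEMMA = {
    ("silent", "silent"): (-1, -1),    # both stay silent: short sentences
    ("silent", "confess"): (-3, 0),    # A stays silent, B confesses
    ("confess", "silent"): (0, -3),    # A confesses, B stays silent
    ("confess", "confess"): (-2, -2),  # both confess: longer sentences
}

# A simple coin-matching wager: whatever one player wins, the other loses.
WAGER = {
    ("heads", "heads"): (1, -1),
    ("heads", "tails"): (-1, 1),
    ("tails", "heads"): (-1, 1),
    ("tails", "tails"): (1, -1),
}


def is_zero_sum(game):
    """Return True if the two payoffs cancel out in every outcome."""
    return all(a + b == 0 for a, b in game.values())


def total_payoffs(game):
    """Map each outcome to the combined payoff of both players."""
    return {outcome: a + b for outcome, (a, b) in game.items()}


if __name__ == "__main__":
    print("Wager is zero-sum:", is_zero_sum(WAGER))
    print("Prisoner's Dilemma is zero-sum:", is_zero_sum(PRISONERS_DILEMMA))
    print("Combined payoffs:", total_payoffs(PRISONERS_DILEMMA))
```

In this sketch, the combined payoff in the Prisoner's Dilemma varies by outcome, which is what leaves room for the players to be jointly better or worse off.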

Information is an essential component of every game, as information is at the heart of strategic interaction – particularly information regarding other players’ decision-making in the game. In perfect information games, all players know all decisions previously made by the other players. As you might suspect, real life rarely allows such omniscience, outside of games like tic-tac-toe or chess. Imperfect information is common to our existence, wherein players cannot see all prior decision-making by the other players within the game.

Complete and incomplete information is another informational characteristic of games. In a game with complete information, players understand the potential payoffs, risk tolerances, strategies, and player “types” among other players. Again, complete information is largely unrealistic in the real-world. Instead, games with incomplete information are most common in human interaction, wherein players cannot discern other players’ preferences, motivations, and other strategic information.

Although it may scandalize true game theorists, for perspicuity’s sake, I will summarize these information-based characteristics into the concept of information asymmetry – that players possess relevant information to which the other players do not have access. Typically, information asymmetry is analyzed through the lens of transactions, though I argue it all comes back to decision-making between relevant players.

I believe one can view DevOps and infosec’s relationship as a coordination game with information asymmetry. I do not believe it is a non-cooperation game, as there is ample room for infosec and DevOps to form a coalition, and there is potential for the organization to serve as an external enforcer of cooperation. There is also information asymmetry, as neither DevOps nor infosec has perfect insight into the other’s decision-making process nor access to all relevant information (much of which might be tacit and considered tribal knowledge).

If we proceed with the assumption that DevOps and infosec’s relationship is a coordination game, then our goal is to understand how to encourage cooperation to maximize the collective payoff. This results in a crucial question: what leads to coordination failures? One impetus is strategic uncertainty among players, that they do not realize their objectives are aligned. Perhaps obviously, misalignment of objectives can also present friction in cooperation games. Thus, mechanisms are needed either to facilitate cooperation – in the event of aligned preferences – or enforce cooperation – in the event of less aligned preferences.

Interestingly, empirical evidence suggests that humans are far more cooperative by nature than traditional game theory predicts. In fact, the wealth of data points showing cooperation within games far exceeding predicted values has led scholars to delve into the nature of cooperation, seeking an answer to why this pattern holds. There is a split among those who seek to prove that cooperation still fits within the confines of rational behavior, and those who argue that cooperation represents more of a strategic irrationality – the philosophical nuance of which I will not cover here.

Despite humans being cooperative by nature, there are potential wrinkles that can come when decision-making power and information possession are unequal – known as moral hazard, which we will explore presently.

Moral Hazard

Moral hazard results when someone increases their risk exposure because they are protected from risk impact in some way. It is related to the principal-agent problem, in which someone (the agent) can make decisions on behalf of another person (the principal), leading to the potential for self-interested actions by the agent that are not in the interests of their principal. Information asymmetry exacerbates moral hazard issues, since one party possessing information the other party cannot access creates an incentive for exploitation.

A tangible example of moral hazard is in insurance. If you received an insurance policy with full coverage for all potential losses due to security incidents, theory suggests you will have less of an incentive to invest in a strong security program. The insurance provider may assume you will maintain your current level of protection, but you possess information secret to them – that you have no intention of doing so. Thus, you are willing to accept more risk because you are protected from the risk impact, potentially empowering you to pursue a #yolosec strategy2.
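The insurance example can be sketched as a small expected-cost calculation. Every number below (loss size, breach probabilities, security spend, premium) is a made-up assumption for illustration, as are the function names `expected_cost` and `best_choice`; the point is only to show how full coverage can flip the cost-minimizing choice.

```python
def expected_cost(invest, insured, *, loss=1_000_000,
                  p_breach_invest=0.05, p_breach_skip=0.30,
                  security_cost=100_000, premium=80_000):
    """Expected annual cost to the organization (all figures hypothetical).

    invest  -- whether the organization funds its security program
    insured -- whether a policy fully covers losses from incidents
    """
    p_breach = p_breach_invest if invest else p_breach_skip
    spend = security_cost if invest else 0
    if insured:
        # Full coverage: the organization pays the premium and any
        # security spend, but never bears the loss itself.
        return premium + spend
    # No coverage: the organization bears the expected loss directly.
    return spend + p_breach * loss


def best_choice(insured):
    """Return True if investing in security minimizes expected cost."""
    return min((True, False), key=lambda invest: expected_cost(invest, insured))


if __name__ == "__main__":
    print("Uninsured, invest in security?", best_choice(insured=False))
    print("Insured, invest in security?", best_choice(insured=True))
```

Under these assumed numbers, the uninsured organization is better off investing, while the fully insured one minimizes its own cost by skipping the investment and leaving the extra risk with the insurer.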

Looking to infosec and DevOps, there is the potential for moral hazard given the team structure at most organizations. DevOps can increase their exposure to security-related risk because they are not held accountable for it, making the infosec team bear the risk impact. DevOps can likewise possess hidden motivations or take hidden action from the vantage point of the security team, as not all efforts to secure systems will be observable by infosec. This makes it difficult for infosec to manage the organization’s risk exposure – and thus the ability to manage the risk impact they experience.

I believe moral hazard goes both ways in this relationship, however. Infosec can also increase risk exposure for the rest of the organization – but risk of a different kind. Part of infosec’s infamy is due to creating policies or implementing tools that add risk to their colleagues’ workflows, such as a salesperson wrestling with a clunky VPN when trying to close a deal with a customer. Because infosec is shielded from the risk resulting from friction to their organizational peers’ workflows, they are more willing to add such risk in the name of their own priority: security.

Moral hazard is particularly relevant when considering conflicting goals. Very recent research suggests that decision-makers resolve goal conflicts on behalf of others differently than they would for themselves, potentially resulting in undesirable outcomes for the recipients of the decision. But, first, what defines goal conflict? It is when achieving one goal prohibits or discourages the achievement of another goal. Unless there is the option to satisfy both goals (more on this later), people will only “activate” the higher priority goal.

One study examined moral hazard and goal conflict through the lens of health practitioners choosing between curative care or palliative care for their patients3. Health practitioners typically prioritize the goal of curative care over palliative care, believing the two are in conflict. What makes this context interesting is widespread evidence that these two goals are not actually in conflict. Palliative care can extend lifespan; but at the very least, it is not shown to interfere with curative care.

Why do health practitioners believe these goals are in conflict, and why do they opt for curative care rather than improving patient comfort and pain reduction? The problem lies in moral hazard – that people focus on potential gains and perceive fewer risks when making decisions for others rather than themselves4, and these choices tend to be more creative and idealistic. People can also justify these choices by telling themselves that there are fewer tradeoffs than there are – pretending that it is an easier decision to make.

Another reason behind this errant goal conflict resolution lies in identity. Healthcare providers take pride in being able to solve medical problems, and curative care reinforces that identity. Palliative care, however, can be threatening to this identity, perceived as a form of “giving up.”5 Infosec professionals also take pride in their ability to stop threats and to solve security problems, and accepting risk or compromising can be likewise seen as giving up.

The process by which people accomplish multiple goals can also present challenges in an organizational context. Goals can either be pursued sequentially or concurrently. Sequential goal pursuit allocates resources to one goal at a time, only switching attention to an alternative goal once sufficient progress is perceived. Concurrent goal pursuit directs attention to more than one goal at a time, determining a single choice that can satisfy multiple goals (the “killing two birds with one stone” strategy).

There are benefits of each strategy along the spectrum of sequential to concurrent goal pursuit. “Goal shielding” is the primary mechanism of sequential goal pursuit, wherein alternative goals are inhibited to avoid interfering with pursuit of the primary goal – and it has been found to increase successful goal progress6. The multifinality principle is the primary mechanism of concurrent goal pursuit, wherein a single means is used to satisfy multiple goals – and it has been found to boost efficiency of goal achievement7.

There are downsides to each end of the spectrum, as well. Sequential goal pursuit means that accomplishing multiple goals can take more time. It can also lead to a false sense of security, as seen in a weight management scenario. In one study, those who believed they had made meaningful progress towards their weight goal were more likely to dig into unhealthy food than those who felt that their progress was wanting8. Sequential pursuit also allows the excuse of “I’ll do it later,” when “later” never seems to materialize.

Most with experience in information security have assuredly seen examples of the “I’ll do it later” issue, that certain goals are never achieved and continually pushed off. The false sense of security in the weight management case also manifests in infosec in a few ways, but primarily through the seductive delusion that a series of solid security choices means that security concerns can be ignored for a time, or that enough progress was made on security while ignoring the need for continuous maintenance (let alone improvement).

Concurrent goal pursuit requires a readily-available solution capable of satisfying multiple goals at the same time, which is not always possible. What’s more, these magical solutions end up being discounted due to their versatility. For instance, in one study, when people were asked to think of three goals a computer serves, they perceived the computer as less important to accomplishing those goals than when they were asked to think of only one goal a computer serves9. While a computer is seen as essential for the sole task of checking email, it is perceived as less critical for checking email when considered alongside the goals of using a search engine or browsing an online publication. Thus, concurrent goal pursuit can save time, but can potentially lead to the feeling of less being accomplished.

This curious effect, known as the “dilution of instrumentality,” is considered decidedly irrational, entirely based on people’s mental models rather than objective evidence of how a course of action can serve multiple goals. I believe this is commonly seen in infosec when evaluating solutions that can help both infosec and DevOps – that any course of action or means to an end that can solve use cases on both sides must not be a very good or powerful one.

Of course, in the context of enterprise security, there is not just one individual pursuing goals. Let us now dive into the concept of team reasoning to better understand the nature of collaborative goal pursuit.

Team Reasoning

Team reasoning is an emerging theory10 that allows for a group of players within a game to represent a single player, postulating that team members aim to achieve common interests, rather than fulfilling their individual self-interest. As Sugden, one of the parents of the theory, would say, team reasoning represents “intentional cooperation for mutual benefit.” It is commonly referred to as “we thinking” or “joint intentionality” in the literature, and is considered a conceptual kin to “collective intentionality” from the realm of philosophy.11

There is ample experimental evidence suggesting that team reasoning serves as a more accurate theoretical framework than traditional game theory. For example, one study conducted five game types and found that across all games, a majority of players eschewed the individually rational strategy and opted instead for the collectively rational strategy – the one that maximized the group’s payoff12. An earlier study demonstrated that players specifically identified “focal points” – targets that naturally stand out – that facilitated coordination13.

An intuitive reason for cooperation is that we derive value from helping those with whom we identify. On the flip side, if we view the players in a cooperative game as “the others,” we are less likely to cooperate. An imperative ingredient to foster collaboration is encouraging the shift from an individual mindset to a group mindset – to consider what the group’s goals are and what part the individual should play in achieving those goals14. Naturally, the path to this shift to a collective perspective is not so simple as telling the individual to start thinking in that way.

One method to improve this so-called group identification is by making it salient – the behavioral economics term for making a piece of data more readily accessible and more prominent. For instance, I could encourage group identification among blonde people by telling an all-blonde group that I am conducting an experiment to compare brunettes versus blondes.

Regrettably, from what I have witnessed regarding infosec and DevOps’ relationship (or lack thereof), there is almost a point of pride among each group for being distinct. I certainly do not believe that increasing salience of group identification between the two will fix tension among infosec and DevOps – but it does seem like an important step worth attempting. In any projects or important decision-making meetings, increasing salience by emphasizing that you, collectively, are the core of the organization’s technology group – party members on the imperative quest of ensuring safe software delivery performance – can perhaps remind everyone more of the collective similarities rather than differences. Even more compellingly, this effect can be magnified if you emphasize the true “other.”

Emphasizing potential negative outcomes due to a group of “others” dissimilar from your group (as a complement to espousing the potential positive outcomes due to group collaboration) offers potent results, backed by experimental evidence.15 This is known as “perceived interdependence,” achieved by framing the situation as one in which the group needs each other in the face of dissimilar “others” who might seek to harm them.

Luckily, it is not a stretch to present organizational defense in this light. Relative to attackers, infosec should lie squarely in the “in-group” for DevOps – despite their perceived antagonistic qualities otherwise. With that said, there may be a perception that security is a more harrowing threat to DevOps than attackers, particularly if DevOps was relatively shielded from incidents in the past, because security stymies their work far more acutely. Hence, I believe attempting group salience alone to fix coordination issues is likely to produce mixed results in the vexed realm of infosec and DevOps relations.

Leveraging salience in other ways may prove more fruitful, however. Experimental evidence indicates that making the joint goal salient makes team reasoning more likely to occur16. Unfortunately, in many games, payoffs are asymmetric, meaning one player or group receives a greater reward for success than their co-player or co-group. Such asymmetry can result in miscoordination, because players find the payoff factor more salient and begin to act more competitively. For instance, in one experiment, games with symmetric payoffs resulted in a 93% cooperation rate, while games with even small asymmetric payoffs dropped to a 49% coordination rate17.

At most organizations, payoffs for infosec and DevOps will be largely unknown, as most compensation information is private. However, perceived payoffs for success might result in greater miscoordination – and it will most likely take the form of infosec feeling less rewarded than DevOps, if I were to hazard a guess. These rewards are not necessarily monetary, either. The disparity in payoffs between DevOps and infosec is perhaps highest in social rewards, namely social recognition.

One all too common scenario is that infosec may insist on a fix of a critical vulnerability shortly before an anticipated release, requiring last minute scrambling by DevOps to unblock the release. DevOps may be rewarded for these efforts, which might have been avoided by fixing the vulnerability when it was first discovered by the infosec team, rather than ignoring or denying it. Infosec, however, is more likely to be chided than noted for their contributions.

A potential counter is to publicly state equal rewards for certain joint goals, such as mean time to remediation or, more contentiously, fewer vulnerabilities introduced into production, so that this data point regarding payoffs becomes most salient. Again, these rewards do not have to be monetary, but should involve joint recognition at an organizational level. With that said, emphasizing “label salience” – accentuating group salience, as discussed before – may also serve as a deterrence from payoff salience, so experimentation is recommended.

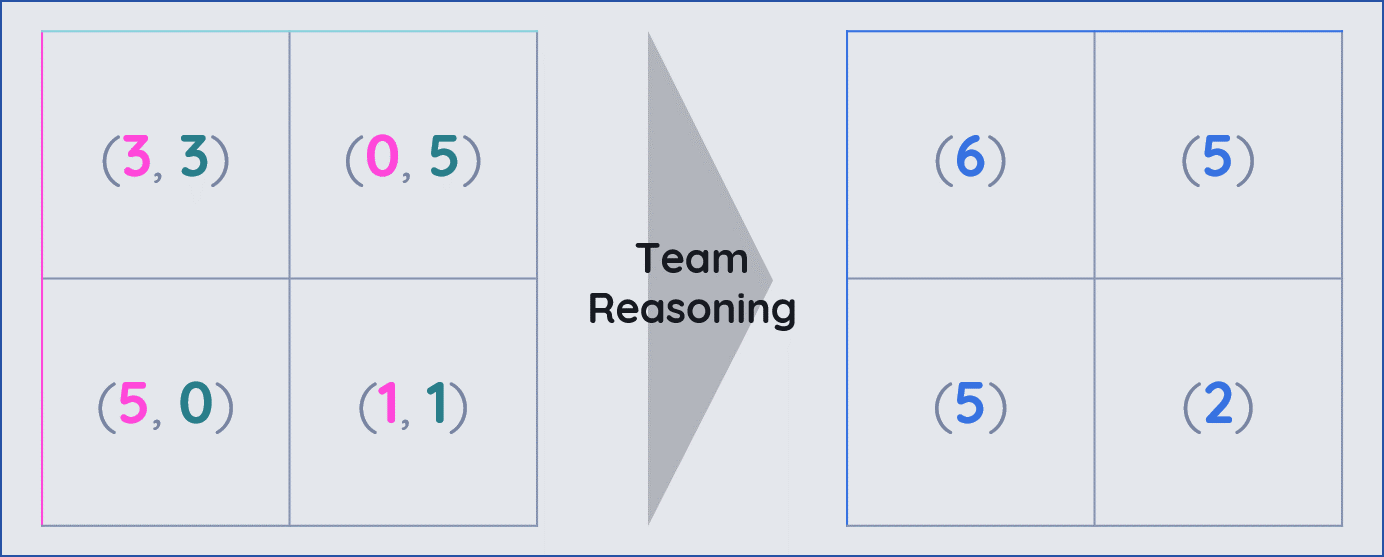

When emphasizing joint payoffs, the payoff matrix for a game changes, as shown below. The new, “we-thinking” payoff matrix illustrates why the theory of team reasoning can still easily support the notion of rationality, as it makes perfect sense for a player to choose a payoff-maximizing strategy:

By visualizing team reasoning through this payoff matrix, its value in promoting coordination becomes palpable. This serves as the perfect starting point for contemplating how we can win the coordination game.
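For readers who prefer code to matrices, the shift from individual payoffs to “we-thinking” payoffs can be sketched in a few lines of Python. The numbers are hypothetical and are not taken from the figure, and treating the team payoff as the simple sum of individual payoffs is my own simplifying assumption, as are the names `team_matrix`, `team_best_profile`, and `dominant_strategy`.

```python
STRATEGIES = ("cooperate", "defect")

# Hypothetical individual payoffs: (row player, column player).
INDIVIDUAL = {
    ("cooperate", "cooperate"): (3, 3),
    ("cooperate", "defect"): (0, 5),
    ("defect", "cooperate"): (5, 0),
    ("defect", "defect"): (1, 1),
}


def team_matrix(individual):
    """Collapse each outcome into one team payoff (assumed to be the sum)."""
    return {profile: sum(payoffs) for profile, payoffs in individual.items()}


def team_best_profile(individual):
    """Return the strategy profile that maximizes the team payoff."""
    team = team_matrix(individual)
    return max(team, key=team.get)


def dominant_strategy(individual, player):
    """Return a strategy that is a best response to every opposing
    strategy for `player` (0 = row, 1 = column), or None if none exists."""
    def payoff(own, other):
        profile = (own, other) if player == 0 else (other, own)
        return individual[profile][player]

    for candidate in STRATEGIES:
        if all(payoff(candidate, other) >= payoff(alternative, other)
               for other in STRATEGIES for alternative in STRATEGIES):
            return candidate
    return None


if __name__ == "__main__":
    print("Individually dominant (row):", dominant_strategy(INDIVIDUAL, 0))
    print("Individually dominant (column):", dominant_strategy(INDIVIDUAL, 1))
    print("Team payoffs:", team_matrix(INDIVIDUAL))
    print("Team-reasoning choice:", team_best_profile(INDIVIDUAL))
```

With these assumed payoffs, each player reasoning alone is pulled toward defecting, whereas a player maximizing the team payoff picks mutual cooperation, and does so by ordinary payoff maximization.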

Facilitating Cooperation

In the course of my research, I did not encounter a single organization where DevOps and infosec codified guidelines for how they should interact – or even documented their collective objectives. As I will discuss in this section, choosing the right goals and publicizing them in the right way is essential to facilitate cooperation.

Team Reasoning

One notable and highly relevant experiment involves the centipede game, from Lambsdorff, et al.18 The centipede game is a multi-stage trust game in which players alternate offers. For instance, in the first round, I could receive $10 while you receive $2, or I could pass the choice over to you to double each amount – giving you the choice of receiving $20 and me receiving $4, or passing it back to me to double the amounts again. This game structure is useful for exploring the reciprocal nature of cooperation, as the subgame perfect Nash equilibrium (i.e. what the rational player “should” play) is for the players to never pass.
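The “never pass” prediction falls out of backward induction, which can be sketched as follows. This uses the $10 / $2 doubling example from the paragraph above rather than the actual payoffs from the experiment, and it assumes a four-stage game in which passing at the final stage doubles the amounts one last time with the larger share going to the other player. The function names `take_payoffs` and `solve` are my own.

```python
def take_payoffs(stage, large=10, small=2):
    """Payoffs (mover, other) if the mover takes at `stage` (0-indexed).

    Each pass doubles both amounts, per the example in the text.
    """
    scale = 2 ** stage
    return large * scale, small * scale


def solve(stage, num_stages):
    """Backward induction from `stage` onward.

    Returns (action, mover_payoff, other_payoff) for the player who
    moves at `stage`, assuming everyone maximizes their own payoff.
    """
    take_mover, take_other = take_payoffs(stage)
    if stage == num_stages - 1:
        # Assumption: passing at the last stage ends the game with the
        # amounts doubled once more and the larger share going to the
        # other player.
        pass_other, pass_mover = take_payoffs(stage + 1)
    else:
        # The other player moves next; swap roles when reading the result.
        _, next_mover, next_other = solve(stage + 1, num_stages)
        pass_mover, pass_other = next_other, next_mover
    if take_mover >= pass_mover:
        return "take", take_mover, take_other
    return "pass", pass_mover, pass_other


if __name__ == "__main__":
    stages = 4  # hypothetical game length
    for s in range(stages):
        print("stage", s, "->", solve(s, stages))
    print("if everyone passes:", take_payoffs(stages))
```

At every stage in this sketch, taking beats the payoff the mover would receive after passing, so the self-interested prediction unravels all the way back to the first move, even though both players would end up with far more if everyone passed.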

The empirical evidence starkly differs from the theoretically rational strategy, with a majority of players passing in the first stage, and some games even ending with no one ever taking instead of passing. The results are even more elucidating when compared across the treatments the experimenters created. In one treatment, the game’s payoffs were presented in probabilistic terms, showing the probability of achieving a joint goal rather than individual payoffs. In the other treatment, the game was presented through a soccer analogy, where passing offers was seen through the lens of passing the ball to score a goal.

In games where the number of passes could lie between 0 and 2 for each player, the average number of passes in the probabilistic treatment was approximately 1.5, and for the soccer treatment approximately 1.9 passes. Both averages are substantially higher than the average for the vanilla version of the centipede game – approximately 1.2 passes – and, strikingly, much higher than the subgame perfect Nash equilibrium of 0 passes. Why did these alternative game treatments succeed? The probabilistic treatment was successful in promoting cooperation due to emphasis on the shared goal, removing the salience of the payoff asymmetry (as discussed in the prior section).

What led the soccer treatment to be most effective? The researchers believe it is because the soccer analogy made the joint goal obvious, thereby making the team identity salient. This not only is supportive of the theory of team reasoning, but also perhaps supports the practice common among the stereotypical former-jock sales leaders of using sportsball analogies to foster team spirit and motivation. While I remain somewhat skeptical of the efficacy of sports analogies on engineers, I believe similar levels of salience for group identity can be accomplished and provide similar results in improving cooperation.

What else can these results suggest for the relationship between DevOps and infosec? One is that controls targeting individuals for infractions – for instance, punishing individual developers for security bugs – may be less effective than sharing security-enhancing goals at a group level. Further, emphasizing differences between groups is less effective in improving conflict resolution than emphasizing joint goals. This perhaps is where infosec culture fails the most – presenting a draconian notion of infosec vs. normies and declaring that infosec is a misunderstood, maligned clique that is the gatekeeper of secure practices.

Outcome & Process Goals

Another set of tools for our conceptual arsenal is found in outcome and process goals. Outcome accountability prioritizes people getting things right. Process accountability prioritizes people thinking in the right way. I am a huge proponent of focusing on outcomes rather than outputs, particularly when it comes to measuring success – because lots of aimless creation is not useful, but impactful creation is. Nevertheless, there are caveats to outcome accountability, regarding what it incentivizes, that are necessary to explore before people jump on the outcome bandwagon and fully abandon process accountability.

Outcome accountability is beneficial in incentivizing realization of expected outcomes when making decisions. There is experimental evidence showing that outcome accountability boosts performance relative to process accountability alone.19 However, this can ignore analysis of whether the decision itself was appropriate or not. The potential hazards of outcome accountability include the worsening of overall decision quality, increasing commitment to sunk costs, diminishing of complex thinking, impairment of attentiveness, and reduction of situational awareness20.

In short-term decision-making problems, outcome accountability can lead to analysis paralysis due to uncertainty, or succumbing to cognitive bias, thereby worsening decision quality. Process accountability, in contrast, can help temper the temptation of cognitive bias, helping decision-makers navigate choppy cognitive waters short-term. Further, in the aforementioned experiment, process accountability was shown to promote more effective knowledge sharing, boosting performance of those observing the decision-makers’ actions.

Process accountability, although already not as popular in Silicon Valley, has its deficiencies worth mentioning, too. Process accountability can incentivize adherence to policies and best practices, even when they are not the best options in dynamic environments long-term. It is inherently less flexible than outcome accountability, making it difficult to improvise and incorporate feedback loops to inform better strategy in changing environments. For example, process accountability was shown to be far weaker than outcome accountability in augmenting pilots’ skill in navigating changing environments21.

Thus, a hybrid of outcome accountability and process accountability is optimal, balancing out weaknesses while not decreasing the respective benefits of each method. A hybrid of outcome goals and process goals should give teams the ability to remain flexible while ensuring knowledge transfer and inhibiting potential abuses of power. Importantly, this hybrid accountability can ensure that standard practices are met, but also encourage experimentation with novel strategies. This ensures collective knowledge is not discarded and adaptive prowess is not discouraged – both of which are essential components for designing secure systems and responding to incidents.

Creating stretch outcome goals can encourage innovation, allowing teams to stretch their metaphorical wings and attempt ambitious plans – tempered with the need to still be able to justify process. Stretch goals also tend to be more fun, satiating people’s curiosity and desire to learn new things. For example, a security-related stretch goal might be to create a tool to automate asset inventory management, whereas the basic goal may be to perform manual asset inventory management.

Importantly, process goals should apply equally to infosec and to DevOps. Infosec should justify why they made a less business-friendly decision – helping curb the FUD-driven decision-making to which infosec is prone. DevOps should justify why they made a less secure decision – helping curb the tendency to treat security as an afterthought. By forcing each team to articulate their justifications, it allows the opportunity to, as the kids say, call out bullshit.

Goal Framing

Finally, how these goals are communicated is consequential to winning the coordinative game. Drawing from the aforementioned study involving goal conflict between curative and palliative care, there are a few lessons to glean about how to set goals. First, the study found that participants who received messaging emphasizing the conflicting nature of the goals reported increased perception of goal conflict and decreased importance of palliative care, as expected22.

Second, the researchers anticipated that self-affirmation and self-reflection on values – with the goal of ameliorating cognitive dissonance associated with maintaining conflicting beliefs – would improve providers’ abilities to empathize with patients, thereby increasing the importance of palliative care. Instead, the opposite was shown to be true. Unfortunately, self-affirmation can also deactivate less valued or more difficult goals, becoming a reinforcement mechanism for moral hazard-driven beliefs.

These results certainly seem discouraging! So, what can be done? The key takeaway is that goals must be presented as complementary rather than conflicting, particularly when there is evidence to support it (as is true with the complementary nature of palliative and curative care). When considering infosec and DevOps goals, I have long argued that their respective goals bear far more similarities than differences23. Empirical analysis from the most recent “2019 Accelerate: State of DevOps” report also shows that elite DevOps teams fix security issues earlier and faster than their less performant peers24.

We must also include the ordering of goals in our calculus here. Sequential goal pursuit is inevitable given the relative scarcity of solutions that can accomplish multiple goals, and in light of the tendency for people to prioritize one goal over another. However, concurrent goal pursuit can still be encouraged. DevOps and infosec teams can make a list of their respective goals and perform an exercise to brainstorm where goals from each side might be achieved through the same means. As an example, tools designed to collect data for performance use cases are collecting data valuable for security use cases as well.

To avoid the “dilution of instrumentality” – the perception that the stone is less important in killing the two birds, because it can kill both concurrently – highlight objective information about a choice, tying its importance to each goal directly. For instance, a database monitoring tool can help accomplish both performance and security goals, thus running the risk of invoking the dilution of instrumentality. To re-anchor perception to reality, you can express how the tool relates to each goal specifically: collecting data on resource utilization for performance, and detecting abnormal query behavior for security.

Conclusion

The relationship between DevOps and information security must be healthy for the business to thrive. This relationship, like all relationships, requires work, and understanding it as a cooperative game involving information asymmetry can inform how we can work smarter to nurture it. By leveraging team reasoning, hybrid goals (outcome and process), and framing goals as complementary and concurrent, we become a strong contender for winning this coordinative game.

1. Lui, J. (2008). CSC6480: Introduction to Game Theory: Cooperative Games [PowerPoint slides]. Retrieved from http://www.cse.cuhk.edu.hk/~cslui/CSC6480/cooperative_game.pdf

2. The invention of the term #yolosec is, perhaps, one of my crowning achievements within the infosec industry. See my 2017 Black Hat talk for the use of it in the context of attack trees (slide 77).

3. Ferrer, R. A., Orehek, E., & Padgett, L. S. (2018). Goal conflict when making decisions for others. Journal of Experimental Social Psychology, 78, 93-103.

4. Polman, E. (2012). Self–other decision making and loss aversion. Organizational Behavior and Human Decision Processes, 119(2), 141-150.

5. CAPC (2011). 2011 public opinion research on palliative care: A report based on research by public opinion strategies. Retrieved from https://media.capc.org/filer_public/18/ab/18ab708c-f835-4380-921d-fbf729702e36/2011-public-opinion-research-on-palliative-care.pdf

6. Shah, J. Y., Friedman, R., & Kruglanski, A. W. (2002). Forgetting all else: On the antecedents and consequences of goal shielding. Journal of Personality and Social Psychology, 83(6), 1261.

7. Orehek, E., & Vazeou-Nieuwenhuis, A. (2013). Sequential and concurrent strategies of multiple goal pursuit. Review of General Psychology, 17(3), 339-349.

8. Fishbach, A., & Dhar, R. (2005). Goals as excuses or guides: The liberating effect of perceived goal progress on choice. Journal of Consumer Research, 32(3), 370-377.

9. Zhang, Y., Fishbach, A., & Kruglanski, A. W. (2007). The dilution model: How additional goals undermine the perceived instrumentality of a shared path. Journal of Personality and Social Psychology, 92(3), 389.

10. Team reasoning’s roots are with Sugden and Bacharach, both of whom first released papers on the topic in the 1990s – so it is quite a recent theory on a relative basis.

11. Schweikard, D. P., & Schmid, H. B. (2013). Collective intentionality.

12. Colman, A. M., Pulford, B. D., & Rose, J. (2008). Collective rationality in interactive decisions: Evidence for team reasoning. Acta Psychologica, 128(2), 387-397.

13. Mehta, J., Starmer, C., & Sugden, R. (1994). The nature of salience: An experimental investigation of pure coordination games. The American Economic Review, 84(3), 658-673.

14. Colman, A. M., & Gold, N. (2018). Team reasoning: Solving the puzzle of coordination. Psychonomic Bulletin & Review, 25(5), 1770-1783.

15. Flippen, A. R., Hornstein, H. A., Siegal, W. E., & Weitzman, E. A. (1996). A comparison of similarity and interdependence as triggers for in-group formation. Personality and Social Psychology Bulletin, 22(9), 882-893.

16. One such example is in the study by Lambsdorff, et al. involving the centipede game, mentioned later in this piece.

17. van Elten, J., & Penczynski, S. P. (2018). Coordination games with asymmetric payoffs: An experimental study with intra-group communication. Unpublished manuscript available at http://www.penczynski.de/attach/APC.pdf.

18. Lambsdorff, J. G., Giamattei, M., Werner, K., & Schubert, M. (2018). Team reasoning—Experimental evidence on cooperation from centipede games. PLoS ONE, 13(11), e0206666.

19. Chang, W., Atanasov, P., Patil, S., Mellers, B. A., & Tetlock, P. E. (2017). Accountability and adaptive performance under uncertainty: A long-term view. Judgment & Decision Making, 12(6).

20. Scholars would call it reduction of “epistemic motivation.”

21. Skitka, L. J., Mosier, K. L., & Burdick, M. (1999). Does automation bias decision-making? International Journal of Human-Computer Studies, 51(5), 991-1006.

22. Ferrer, R. A., Orehek, E., & Padgett, L. S. (2018). Goal conflict when making decisions for others. Journal of Experimental Social Psychology, 78, 93-103.

23. Shortridge, K. (2018). Paint by Numbers: Measuring Resilience in Security. Retrieved from /speaking/Paint-by-Numbers-Resilience-in-Infosec-Kelly-Shortridge-AusCERT-2018.pdf

24. Forsgren, N., et al. (2019). 2019 Accelerate State of DevOps Report. Retrieved from https://cloud.google.com/blog/products/devops-sre/the-2019-accelerate-state-of-devops-elite-performance-productivity-and-scaling